No. We provide raw, high-performance bare metal infrastructure. You have full root access to install and manage your own Kubernetes distribution (such as Rancher, Talos, or vanilla Kubernetes), so you keep full control and pay no management markup.

Yes. Our hardware supports IOMMU, which enables PCI passthrough. You can expose physical GPUs directly to your Docker or Podman containers, so real-time AI inference and LLM workloads run without virtualization overhead.

In a microservices architecture, services communicate constantly. A 100 Gbps backbone gives "East-West" traffic (internal communication between containers) enough headroom to keep latency sub-millisecond and to avoid network bottlenecks during traffic surges.
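As a rough illustration of why link speed matters for East-West traffic, the sketch below computes only the serialization delay, the time needed to put a payload on the wire. The 1 MB payload and the link speeds compared are illustrative assumptions, and real latency also includes propagation, switching, and kernel network stack time.

```python
def serialization_delay_us(payload_bytes: int, link_gbps: float) -> float:
    """Time to transmit payload_bytes over a link of link_gbps, in microseconds."""
    bits = payload_bytes * 8
    seconds = bits / (link_gbps * 1e9)
    return seconds * 1e6


# Compare a hypothetical 1 MB inter-service response on three link speeds.
for gbps in (1, 10, 100):
    delay = serialization_delay_us(1_000_000, gbps)
    print(f"{gbps:>3} Gbps: {delay:8.1f} microseconds")
```

At 100 Gbps the 1 MB payload takes about 80 microseconds to serialize, compared with about 8 milliseconds at 1 Gbps.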

Absolutely. You can use our local NVMe RAID-10 arrays as high-speed persistent volumes for databases (MySQL, MongoDB) running inside containers, with up to 1 million IOPS. With the Local Path Provisioner, your data stays on the node's disk and survives container restarts.
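A minimal sketch of what requesting such a volume could look like, assuming the Local Path Provisioner is installed with its default StorageClass name `local-path`. The claim name `mysql-data` and the 100Gi size are hypothetical. The script prints a PersistentVolumeClaim manifest as JSON, which `kubectl apply -f` accepts alongside YAML.

```python
import json


def local_path_pvc(name: str, size: str, storage_class: str = "local-path") -> dict:
    """Build a PersistentVolumeClaim manifest backed by node-local storage."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # Local volumes are mounted by pods on a single node.
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


# Hypothetical claim for a MySQL data directory.
print(json.dumps(local_path_pvc("mysql-data", "100Gi"), indent=2))
```

Volumes provisioned this way live on one node's disk, so the pod using the claim is scheduled to that node.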

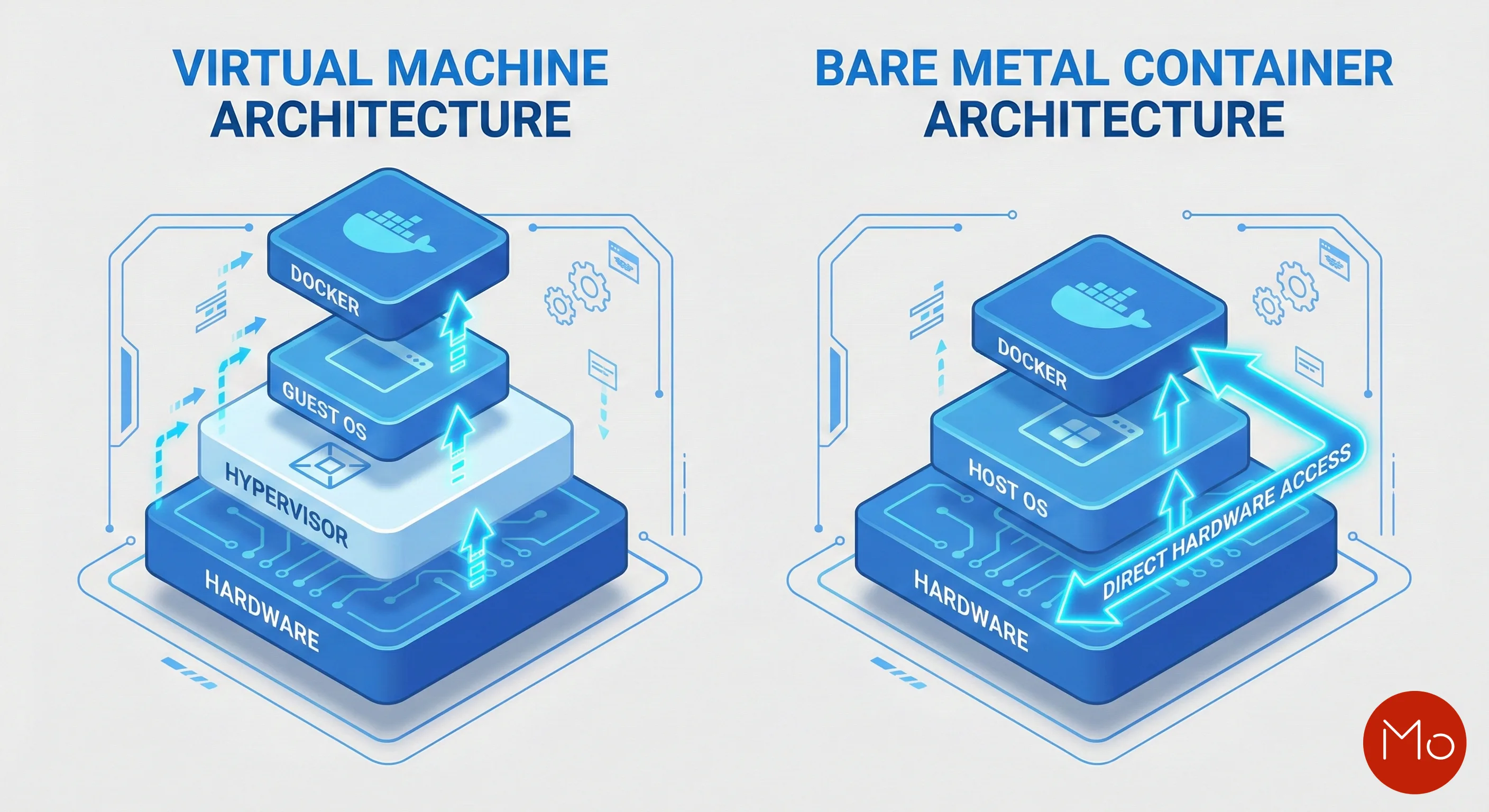

Bare metal removes the hypervisor layer, eliminating the 15-20% "virtualization tax". Your containers run directly on physical CPU cores and RAM, which prevents CPU steal (cycles a hypervisor hands to other tenants) and keeps performance consistent during peak loads.
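CPU steal can be measured on any Linux host. The sketch below, which assumes a Linux kernel that reports the `steal` field in `/proc/stat`, compares two snapshots of the aggregate `cpu` line and reports the share of time taken by a hypervisor. The function names are illustrative.

```python
import time

STEAL_INDEX = 7  # 0-based position of "steal" after the "cpu" label


def parse_cpu_line(line: str) -> list[int]:
    """Parse the counters from a /proc/stat 'cpu' line, dropping the label."""
    return [int(value) for value in line.split()[1:]]


def steal_percent(before: str, after: str) -> float:
    """Percentage of CPU time reported as steal between two 'cpu' lines."""
    deltas = [b - a for a, b in zip(parse_cpu_line(before), parse_cpu_line(after))]
    # Sum user..steal only: guest time is already counted inside user/nice.
    total = sum(deltas[:8])
    if total <= 0:
        return 0.0
    return 100.0 * deltas[STEAL_INDEX] / total


def read_cpu_line(path: str = "/proc/stat") -> str:
    """Read the aggregate 'cpu' line, which is the first line of /proc/stat."""
    with open(path) as f:
        return f.readline()


def sample(interval_s: float = 1.0) -> float:
    """Measure steal percentage over interval_s seconds on the local host."""
    before = read_cpu_line()
    time.sleep(interval_s)
    return steal_percent(before, read_cpu_line())
```

On a bare metal host, `sample()` should report 0.0, since there is no hypervisor to take cycles away. On a shared VM, a persistently non-zero value indicates contention with other tenants.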

On bare metal, you have physical single-tenancy. Unlike in public clouds, you don't share CPU cores or caches (including the L3 cache) with other customers, which removes the cross-tenant path that side-channel attacks such as Spectre and Meltdown rely on. You also have full control to harden your kernel with AppArmor or SELinux profiles.

Yes. Our infrastructure lets you bypass standard bridge networking and use SR-IOV or direct L2 networking. This gives your containerized applications near-native network performance, which is essential for high-frequency trading or real-time VoIP microservices.
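Before configuring SR-IOV, it helps to confirm that a network interface exposes virtual functions (VFs). The sketch below reads the standard Linux sysfs attributes `sriov_totalvfs` and `sriov_numvfs`. The interface name `eth0` is an assumption, and the `sysfs_root` parameter exists only so the function can be pointed at a test directory.

```python
from pathlib import Path
from typing import Optional, Tuple


def sriov_vf_counts(
    iface: str, sysfs_root: str = "/sys/class/net"
) -> Optional[Tuple[int, int]]:
    """Return (total_vfs, enabled_vfs) for iface, or None without SR-IOV support."""
    device = Path(sysfs_root) / iface / "device"
    total_file = device / "sriov_totalvfs"
    if not total_file.exists():
        return None  # interface missing, or driver/NIC does not expose SR-IOV
    total = int(total_file.read_text())
    num_file = device / "sriov_numvfs"
    enabled = int(num_file.read_text()) if num_file.exists() else 0
    return (total, enabled)


# "eth0" is a placeholder; substitute the name of your physical NIC.
print(sriov_vf_counts("eth0"))
```

A result of `None` means the interface or its driver does not advertise SR-IOV. A tuple such as `(64, 0)` means VFs are supported but none are enabled yet.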

The "I/O blender effect" occurs in shared clouds when 100+ VMs compete for the same disk bandwidth. We solve this with direct-attached Gen4 NVMe storage. Because the hardware is not shared, no other tenant can consume your IOPS, which keeps storage latency low and predictable for database-heavy containers.

Testing shows that running GitLab Runners or Jenkins on bare metal with NVMe reduces build times by 40%. Without virtualization overhead, code compilation and image layer builds run at the full speed of the hardware.

Yes. You can link multiple servers across our global locations using 10 Gbps or 100 Gbps private uplinks. This allows you to build high-availability (HA) Kubernetes clusters with dedicated control plane, worker, and etcd nodes for enterprise-grade resilience.
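When sizing dedicated etcd nodes, keep in mind that etcd needs a majority of its members (a quorum) to stay available. The short sketch below shows the arithmetic. The function names are illustrative.

```python
def quorum(members: int) -> int:
    """Smallest number of etcd members that forms a majority."""
    return members // 2 + 1


def failures_tolerated(members: int) -> int:
    """How many members can fail while the cluster still has quorum."""
    return members - quorum(members)


for n in (1, 3, 4, 5, 7):
    print(f"{n} members: quorum {quorum(n)}, tolerates {failures_tolerated(n)} failure(s)")
```

A 3-node etcd cluster tolerates one failure and a 5-node cluster tolerates two. Adding a fourth node to a 3-node cluster raises the quorum without improving fault tolerance, which is why odd member counts are the usual choice.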