We are witnessing a monumental pivot in enterprise IT architecture. In 2026, global power demand from AI workloads is projected to hit an astounding 44 gigawatts (GW), surpassing the demand from conventional data center operations for the first time. This massive scale has exposed the critical flaws of shared infrastructure.

Enterprises are realizing that standard Virtual Private Servers (VPS) and shared cloud instances simply cannot handle continuous, hyper-dense workloads. The solution? A massive migration toward Single-Tenant Bare Metal Servers located in top-tier North American facilities.

The Hidden Enemy: The "Hypervisor Tax"

Why are companies abandoning the convenience of AWS or Azure for heavy AI inference? The answer lies in the Hypervisor Tax.

In a public cloud, a virtualization layer (the hypervisor) sits between your application and the physical hardware. For standard web hosting, this is fine. But for AI models that must saturate PCIe Gen 5 links to keep GPUs fed, the hypervisor becomes a severe bottleneck that can strip away an estimated 10% to 15% of raw performance.

Bare Metal eliminates this. You get direct, unshared, root-level access to the CPU, RAM, and GPUs. Zero overhead. Maximum throughput.
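To put the 10% to 15% figure in context, here is a back-of-the-envelope sketch that computes the theoretical one-direction bandwidth of a PCIe 5.0 x16 link (32 GT/s per lane with 128b/130b encoding) and how much of it would remain under that overhead range. The overhead percentages are the estimate quoted above, not a measurement, and the calculation ignores packet and protocol overhead, so the results are upper bounds.

```python
# Back-of-the-envelope PCIe 5.0 bandwidth under an assumed virtualization overhead.
# PCIe 5.0 runs at 32 GT/s per lane with 128b/130b line encoding; packet and
# protocol overheads are ignored, so these figures are upper bounds.

PCIE5_TRANSFERS_PER_S = 32e9       # 32 GT/s per lane, one bit per transfer
ENCODING_EFFICIENCY = 128 / 130    # 128b/130b encoding


def pcie5_bandwidth_gbytes(lanes: int = 16) -> float:
    """Theoretical one-direction PCIe 5.0 bandwidth in GB/s (10**9 bytes/s)."""
    bits_per_s = PCIE5_TRANSFERS_PER_S * lanes * ENCODING_EFFICIENCY
    return bits_per_s / 8 / 1e9


def effective_bandwidth(raw_gbytes: float, overhead: float) -> float:
    """Bandwidth left after losing a fraction `overhead` (0.10 means 10%)."""
    if not 0.0 <= overhead < 1.0:
        raise ValueError("overhead must be in the range [0, 1)")
    return raw_gbytes * (1.0 - overhead)


if __name__ == "__main__":
    raw = pcie5_bandwidth_gbytes(16)
    print(f"PCIe 5.0 x16, one direction: {raw:.1f} GB/s")
    for overhead in (0.10, 0.15):  # the article's estimated hypervisor-tax range
        print(f"  with {overhead:.0%} overhead: {effective_bandwidth(raw, overhead):.1f} GB/s")
```

Under these assumptions an x16 link offers roughly 63 GB/s per direction, of which about 54 to 57 GB/s would remain at 10% to 15% overhead.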

The 2026 Market Reality: USA Dominates Infrastructure

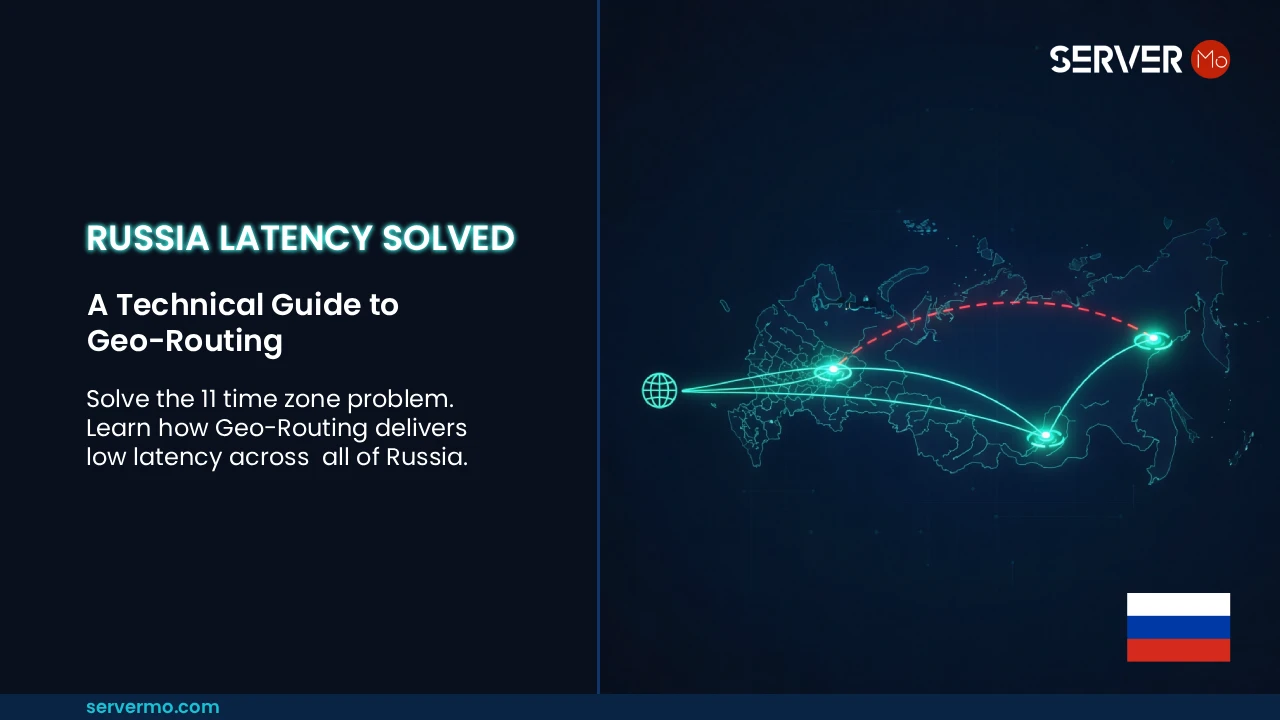

You cannot talk about global AI infrastructure without talking about the United States. As of Q1 2026, the USA houses over 4,011 active data centers, making it the undisputed capital of raw compute power. To support extreme scaling across this massive grid, providers like ServerMO have expanded to offer enterprise-grade bare metal access in over 100 US locations. From major tech hubs such as Silicon Valley, Ashburn (Virginia), Dallas, and New York City to strategic edge locations in Ohio, Oregon, and Florida, your workloads can run close to the users they serve.

| 2026 Market Metric | Cloud / Shared VM | US Bare Metal (Dedicated) |
|---|---|---|
| Compute Access | Shared / Throttled | 100% Dedicated (Single-Tenant) |
| Hardware-Level Control | No access to BIOS/Firmware | Full IPMI / Root Access |
| Network Latency (Americas) | Variable (Noisy Neighbors) | Sub-10ms across 100+ US locations |
| Data Egress Fees | High ($0.09 per GB) | Unmetered 1Gbps to 100Gbps ports as standard |
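Latency figures like the sub-10ms row are bounded by physics: signals in optical fiber travel at roughly 200 km per millisecond, about two-thirds the speed of light in a vacuum. The sketch below uses that approximation to estimate the propagation-only round-trip time (RTT) for a given distance, and the farthest a user can be from a facility within an RTT budget. The `route_factor` parameter is a hypothetical allowance for fiber paths longer than the straight-line distance; queuing and processing delays are not modeled.

```python
# Propagation-only latency estimates for optical fiber.
# Assumption: ~200 km per millisecond in fiber (about 2/3 of c).
# Real RTTs are higher because of routing detours, queuing and processing.

FIBER_KM_PER_MS = 200.0


def min_rtt_ms(distance_km: float, route_factor: float = 1.0) -> float:
    """Round-trip propagation time for a path `route_factor` x the straight-line distance."""
    one_way_km = distance_km * route_factor
    return 2.0 * one_way_km / FIBER_KM_PER_MS


def max_distance_km(rtt_budget_ms: float, route_factor: float = 1.0) -> float:
    """Largest straight-line distance whose propagation RTT fits in the budget."""
    return rtt_budget_ms * FIBER_KM_PER_MS / (2.0 * route_factor)


if __name__ == "__main__":
    print(max_distance_km(10.0))                 # 1000.0 km with a perfectly straight path
    print(round(max_distance_km(10.0, 1.5), 1))  # 666.7 km if the route is 50% longer
    print(min_rtt_ms(4000.0))                    # 40.0 ms for a ~4,000 km coast-to-coast span
```

Under these assumptions a 10ms RTT budget covers users within about 1,000 km of a facility, while a coast-to-coast path of roughly 4,000 km needs at least 40ms. That is why a sub-10ms figure depends on having a location near each user rather than a single central site.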

Hardware Deep Dive: Blackwell, H100 & 100kW Racks

Hardware is evolving faster than traditional server rooms can handle. Modern setups built on top-tier processors such as dual AMD EPYC, Intel Xeon Scalable, and Intel Core i9, alongside massive GPU arrays, are pushing rack power densities toward the 100kW mark.
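A simple power budget shows why GPU servers push racks toward 100kW. The sketch below estimates per-server draw from a GPU count and counts how many whole servers fit in a rack budget. The 700 W per GPU matches the maximum rating of an H100 SXM module, but the 3,000 W platform allowance (CPUs, memory, NICs, fans, power-supply losses) and the 15kW comparison rack are hypothetical round numbers, not vendor specifications.

```python
import math

# Hypothetical power figures for illustration; check vendor specs for real systems.
GPU_WATTS = 700.0          # per GPU; H100 SXM modules are rated up to 700 W
PLATFORM_WATTS = 3_000.0   # assumed CPUs, RAM, NICs, fans and PSU losses per server


def server_watts(gpus: int, gpu_watts: float = GPU_WATTS,
                 platform_watts: float = PLATFORM_WATTS) -> float:
    """Estimated peak draw of one GPU server in watts."""
    return gpus * gpu_watts + platform_watts


def servers_per_rack(rack_kw: float, per_server_watts: float) -> int:
    """Number of whole servers that fit inside a rack power budget."""
    return math.floor(rack_kw * 1000.0 / per_server_watts)


if __name__ == "__main__":
    per_server = server_watts(8)   # 8-GPU node: 8,600 W under these assumptions
    for rack_kw in (15.0, 100.0):  # hypothetical legacy rack vs. a 100kW rack
        n = servers_per_rack(rack_kw, per_server)
        print(f"{rack_kw:.0f} kW rack -> servers: {n}, GPUs: {n * 8}")
```

With these assumed figures, a 15kW rack holds a single 8-GPU server, while a 100kW rack holds 11 of them (88 GPUs).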

To stay ahead of the AI curve, enterprises need access to the newest hardware. That means moving beyond standard graphics cards to deploy the latest NVIDIA RTX PRO 6000 Blackwell GPUs alongside H100, A100, RTX 6000 Ada Generation, and 24 GB RTX 4090 cards. Combined with high-speed unmetered ports from 10Gbps up to 100Gbps, this level of processing power makes true real-time AI inference a reality.
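Unmetered bandwidth matters because of how much data a busy port can move. The sketch below converts a port speed and an average utilization into decimal terabytes per 30-day month. The 30-day month and the 30% utilization value are assumptions for illustration, and protocol headers are ignored, so the results are upper bounds.

```python
# Monthly data volume a network port can carry at a given average utilization.
# Assumptions: a 30-day month and decimal units (1 TB = 10**12 bytes);
# protocol overhead is ignored, so results are upper bounds.

SECONDS_PER_MONTH = 30 * 24 * 3600  # 2,592,000 s in a 30-day month


def monthly_transfer_tb(port_gbps: float, utilization: float = 1.0) -> float:
    """Terabytes moved per 30-day month by a `port_gbps` link at `utilization` (0 to 1)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    bits = port_gbps * 1e9 * utilization * SECONDS_PER_MONTH
    return bits / 8 / 1e12


if __name__ == "__main__":
    for gbps in (10, 100):
        full = monthly_transfer_tb(gbps)
        typical = monthly_transfer_tb(gbps, 0.3)  # hypothetical 30% average utilization
        print(f"{gbps} Gbps: {full:,.0f} TB at 100%, {typical:,.0f} TB at 30%")
```

A fully saturated 10Gbps port moves about 3,240 TB in 30 days, and a 100Gbps port about 32,400 TB; on a metered plan, every one of those terabytes would be billable.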

Cooling this immense hardware requires more than standard HVAC. As rack densities approach the 100kW mark, Direct Liquid Cooling (DLC) becomes effectively mandatory. 2026 is also bringing wider deployment of Compute Express Link (CXL), an interconnect standard whose 2.0 and later revisions support memory pooling across servers in Bare Metal environments. Features like these are complex and inefficient to implement in shared virtual clouds.
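The case for liquid cooling follows from the heat-transport relation Q = m × c_p × ΔT: the coolant mass flow m needed to carry away heat Q at a temperature rise ΔT is inversely proportional to the coolant's specific heat c_p. The sketch below applies it to a 100kW rack using approximate textbook property values for water and air. The temperature rises (10 K for water, 15 K for air) are assumed design points, and it simplifies by assuming a single coolant removes all of the heat.

```python
# Coolant flow needed to remove a heat load: Q = m_dot * c_p * delta_T.
# Property values are approximate room-temperature textbook figures.

WATER_CP = 4186.0       # J/(kg*K)
WATER_DENSITY = 1000.0  # kg/m^3 (approximate)
AIR_CP = 1005.0         # J/(kg*K)
AIR_DENSITY = 1.2       # kg/m^3 near sea level (approximate)


def mass_flow_kg_s(heat_w: float, cp: float, delta_t_k: float) -> float:
    """Coolant mass flow (kg/s) that absorbs `heat_w` watts with a `delta_t_k` rise."""
    return heat_w / (cp * delta_t_k)


def volume_flow_m3_s(heat_w: float, cp: float, density: float, delta_t_k: float) -> float:
    """Same requirement expressed as volumetric flow in m^3/s."""
    return mass_flow_kg_s(heat_w, cp, delta_t_k) / density


if __name__ == "__main__":
    rack_w = 100_000.0  # the 100kW rack discussed above
    water = volume_flow_m3_s(rack_w, WATER_CP, WATER_DENSITY, 10.0)  # assumed 10 K rise
    air = volume_flow_m3_s(rack_w, AIR_CP, AIR_DENSITY, 15.0)        # assumed 15 K rise
    print(f"water: {water * 60_000:.0f} L/min")  # m^3/s -> L/min
    print(f"air:   {air:.2f} m^3/s")
    print(f"air needs ~{air / water:.0f}x the volume flow of water")
```

Under these assumptions, the rack needs roughly 143 liters of water per minute versus about 5.5 cubic meters of air per second, a volume ratio of more than 2,000 to 1.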

Future-Proofing Your Infrastructure

As hyperscale data centers expand, shared cloud environments simply cannot keep up with continuous, intensive processing. To future-proof your infrastructure with predictable costs and zero hypervisor overhead, deploying on our high-performance USA dedicated servers ensures you get 100% of the raw compute power. With access to the latest Blackwell GPUs and up to 100Gbps unmetered bandwidth across 100+ American facilities, your AI applications are no longer held back by shared-tenancy bottlenecks.

The Economic Verdict: Predictable TCO

The global Bare Metal market is forecast to reach $78.79 billion by 2034. The primary driver? Total Cost of Ownership (TCO). Cloud computing offers agility for startups, but as workloads mature, unpredictable data egress fees and compute costs become paralyzing. By migrating to a US-based Bare Metal architecture, enterprises lock in flat-rate pricing while unlocking bleeding-edge AI hardware.
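The TCO argument comes down to simple arithmetic: a metered cloud bill grows with every gigabyte of egress, while a flat-rate server does not. The sketch below compares the two and solves for the break-even egress volume. The $0.09 per GB rate comes from the comparison table earlier in this article; the hourly instance rate, the 730-hour month and the flat monthly price are hypothetical placeholders, not quotes from any provider.

```python
# Metered cloud cost vs. a flat monthly price, as a function of egress volume.
# All prices except the $0.09/GB egress rate are hypothetical placeholders.

HOURS_PER_MONTH = 730.0  # average month (8,760 hours / 12)
EGRESS_PER_GB = 0.09     # USD per GB, the metered rate cited in the table


def cloud_monthly_cost(hourly_rate: float, egress_gb: float,
                       egress_per_gb: float = EGRESS_PER_GB) -> float:
    """Compute charges for a full month plus metered egress, in USD."""
    return hourly_rate * HOURS_PER_MONTH + egress_gb * egress_per_gb


def break_even_egress_gb(flat_monthly: float, hourly_rate: float,
                         egress_per_gb: float = EGRESS_PER_GB) -> float:
    """Egress volume (GB) at which the metered bill equals the flat monthly price."""
    compute = hourly_rate * HOURS_PER_MONTH
    if compute >= flat_monthly:
        return 0.0  # compute alone already costs more than the flat rate
    return (flat_monthly - compute) / egress_per_gb


if __name__ == "__main__":
    hourly, flat = 2.00, 2_000.00  # hypothetical instance rate and flat server price
    for tb in (1, 10, 100):
        cost = cloud_monthly_cost(hourly, tb * 1000)  # 1 TB = 1,000 GB
        print(f"{tb:>3} TB egress: cloud ${cost:,.0f} vs flat ${flat:,.0f}")
    print(f"break-even at ~{break_even_egress_gb(flat, hourly) / 1000:.1f} TB/month")
```

With these placeholder numbers, egress alone adds $9,000 to the cloud bill at 100 TB per month, and the flat-rate option becomes cheaper once egress passes about 6 TB.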

Technical FAQ: Bare Metal vs Cloud

Public clouds impose a "Hypervisor Tax": a virtualization layer that can cut PCIe Gen 5 data transfer performance by up to 15%. Bare metal servers provide direct root access to GPUs and CPUs, which is essential for continuous, high-density AI inference.

The USA dominates global infrastructure. Providers like ServerMO offer bare metal access across over 100 US locations, including tech hubs like Silicon Valley and Ashburn, providing unmatched sub-10ms latency, massive power grids, and advanced cooling for high-density racks.

The Hypervisor Tax refers to the performance loss caused by the hypervisor software that runs virtual machines on public clouds. For compute-heavy AI models, this overhead adds latency, and single-tenant Bare Metal servers eliminate it entirely.
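On x86 Linux, one quick way to confirm that a server is not a virtual machine is to check whether the kernel reports the `hypervisor` CPU flag in `/proc/cpuinfo`. That flag reflects the CPUID "hypervisor present" bit, which hypervisors typically set for their guests. The sketch below parses that file; the function names are illustrative. Treat the result as a hint rather than proof, since a hypervisor can hide the bit and the file layout differs on non-x86 architectures. On systemd-based distributions, `systemd-detect-virt` offers a more thorough check and prints `none` on bare metal.

```python
from pathlib import Path
from typing import Optional


def has_hypervisor_flag(cpuinfo_text: str) -> bool:
    """True if any x86 'flags' line in /proc/cpuinfo text lists 'hypervisor'."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Match the 'flags' line exactly (skips e.g. the separate 'vmx flags' line).
        if sep and key.strip() == "flags" and "hypervisor" in value.split():
            return True
    return False


def running_under_hypervisor(path: str = "/proc/cpuinfo") -> Optional[bool]:
    """Check the local machine; returns None if the cpuinfo file is unavailable."""
    cpuinfo = Path(path)
    if not cpuinfo.is_file():
        return None
    return has_hypervisor_flag(cpuinfo.read_text())


if __name__ == "__main__":
    result = running_under_hypervisor()
    if result is None:
        print("cannot tell: /proc/cpuinfo not found (not Linux?)")
    elif result:
        print("hypervisor flag present: this looks like a virtual machine")
    else:
        print("no hypervisor flag: consistent with bare metal (not proof)")
```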