When discussing AI infrastructure, the conversation almost exclusively revolves around single-node optimization: NVLink bandwidth, PCIe lanes, and GPU VRAM. While optimizing a single box is necessary, it completely misses the reality of 2026: Scaling AI is fundamentally a Distributed Systems problem.

An autonomous AI Agent doesn't just generate text; it operates in a continuous, recursive loop (Think → Query Vector DB → Call External API → Evaluate). When you scale from one agent to thousands, the bottleneck shifts from the GPU to network Round Trip Time (RTT), queueing dynamics, and distributed tracing. Let's examine the brutal realities of scaling agentic architectures.

The Networking Bottleneck: The N+1 Tool Calling Problem

There is a misconception that data serialization (parsing JSON payloads) is a primary bottleneck in AI networks. The truth is, modern enterprise CPUs parse typical tool-call JSON payloads in microseconds. The real networking killer is the Sequential Tool Calling (N+1) Problem.

An AI agent often needs the result of API Call A before it can formulate API Call B. If your agent makes 10 sequential calls to a third-party service, and your network latency is 80ms, you have just introduced 800ms of pure dead time into your execution loop. During this time, your expensive GPUs are sitting completely idle, waiting on the network.
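The arithmetic above can be written down as a small model. This is a minimal sketch, not a benchmark: it assumes every call costs exactly one round trip and ignores the remote service's own processing time, and `compute_ms` is a hypothetical figure that does not come from the article.

```python
def network_dead_time_ms(num_sequential_calls: int, rtt_ms: float) -> float:
    """Total network wait when every call must finish before the next starts."""
    return num_sequential_calls * rtt_ms


def idle_fraction(num_sequential_calls: int, rtt_ms: float, compute_ms: float) -> float:
    """Share of one agent loop spent waiting on the network.

    compute_ms is an assumed amount of GPU work per loop (hypothetical).
    """
    wait_ms = network_dead_time_ms(num_sequential_calls, rtt_ms)
    return wait_ms / (wait_ms + compute_ms)


# The example from the text: 10 dependent calls at 80 ms each.
print(network_dead_time_ms(10, 80.0))   # 800.0
# With an assumed 200 ms of GPU work per loop, 80% of the loop is waiting.
print(idle_fraction(10, 80.0, 200.0))   # 0.8
```

Because the calls are dependent, the wait scales linearly with both the number of calls and the RTT, which is why reducing RTT pays off on every step of the loop.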

Network Colocation: The Physics of API Proximity

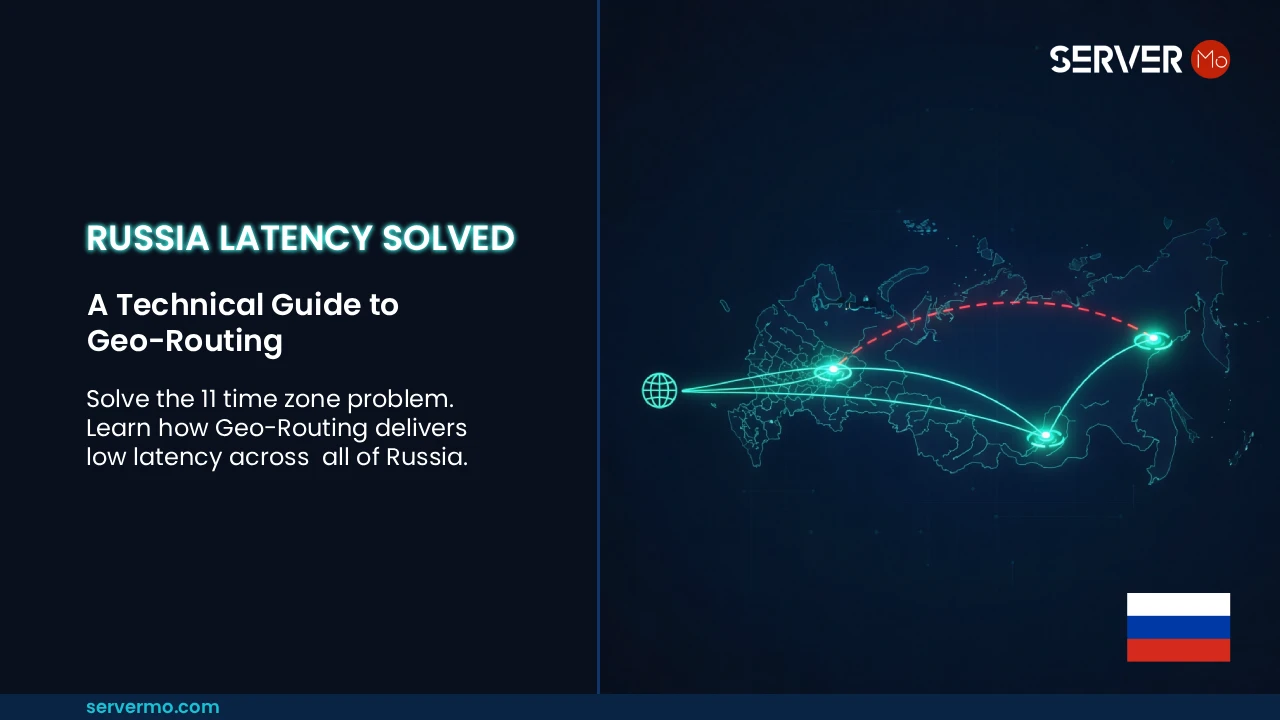

How do you solve this RTT bottleneck? By respecting the speed of light. The majority of enterprise SaaS platforms and APIs host their core ingress points on the US Internet Backbone.

If your AI infrastructure is hosted in a remote location or overseas, your agent's recursive loop will be severely throttled. This is why Network Colocation (API Proximity) dictates physical deployment. ServerMO doesn't just offer generic "USA Servers"; our footprint covers the exact epicenters of global data traffic, including Ashburn (Virginia), Silicon Valley (California), Washington DC, Dallas, and New York.

By deploying your Bare Metal inference nodes in locations like Ashburn (the data center capital of the world), you collapse transatlantic API round-trip latency from 100ms+ down to a localized 1-5ms. This physical proximity fundamentally accelerates the agent's multi-step loop.
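Before choosing a location, it can help to measure the round trip from a candidate server to the APIs your agents actually call. The sketch below approximates RTT by timing a TCP handshake, which takes roughly one round trip. The function name and defaults are illustrative, and if `host` is a name rather than an IP address the timing also includes DNS resolution.

```python
import socket
import statistics
import time


def tcp_connect_rtt_ms(host: str, port: int, samples: int = 5,
                       timeout: float = 2.0) -> float:
    """Median time to complete a TCP handshake with host:port, in milliseconds."""
    timings_ms = []
    for _ in range(samples):
        start = time.perf_counter()
        # create_connection returns once the handshake has completed.
        with socket.create_connection((host, port), timeout=timeout):
            elapsed_s = time.perf_counter() - start
        timings_ms.append(elapsed_s * 1000.0)
    return statistics.median(timings_ms)


# Example (hypothetical endpoint, run from the candidate server):
# print(tcp_connect_rtt_ms("api.example.com", 443))
```

Running the same probe from several candidate locations gives a direct comparison of how much each one would add to every step of the agent loop.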

| System Bottleneck | Naive Architecture | Production Distributed System |
|---|---|---|
| Tool Call Latency | Geographically distant (80ms+ RTT) | API Proximity (Ashburn/SV Colocation) |
| Load Management | Synchronous blocking calls | Kafka/NATS async queues & Backpressure |
| Multi-node Scaling | Replicating full models | Tensor Parallelism & Data Sharding |
| Tracing (O11y) | Cloud-metered log exports | Unmetered eBPF & OpenTelemetry |

Queueing Theory: Handling the Load

Inference at scale is governed by Queueing Theory. LLM generation is resource-intensive, so each node can serve requests only at a finite rate. When concurrent requests spike, they form a queue. As the arrival rate of requests approaches the processing rate, latency climbs sharply and nonlinearly; once arrivals exceed the processing rate, the queue grows without bound, leading to system timeouts.
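One way to see this behavior is the textbook M/M/1 queue, where the mean time a request spends in the system is 1 / (service rate - arrival rate). This is a deliberately simplified model (a single server, random arrivals, no batching) and it describes mean rather than tail latency, so treat it as an illustration and not as a description of any real inference stack. The service rate below is a hypothetical number.

```python
def mm1_mean_latency_s(arrival_rate: float, service_rate: float) -> float:
    """Mean time in system (queueing + service) for an M/M/1 queue, in seconds.

    Rates are in requests per second. At or above saturation the queue
    grows without bound, so the mean latency is reported as infinite.
    """
    if arrival_rate >= service_rate:
        return float("inf")
    return 1.0 / (service_rate - arrival_rate)


SERVICE_RATE = 10.0  # hypothetical: requests per second one node can finish

for utilization in (0.5, 0.8, 0.9, 0.95, 0.99):
    latency = mm1_mean_latency_s(utilization * SERVICE_RATE, SERVICE_RATE)
    print(f"utilization {utilization:.2f} -> mean latency {latency:.2f} s")
```

In this model, moving from 50% to 90% utilization multiplies mean latency roughly fivefold, and the last few percent before saturation cost far more than that.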

You cannot simply "add more GPUs" to fix a queueing collapse. Resilient AI systems require strict architectural controls: Backpressure Handling to cleanly reject requests when saturated, Asynchronous Pipelines (using a broker such as Kafka or NATS) to decouple request intake from execution, and continuous batching frameworks like vLLM to optimize the GPU workload dynamically.
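The backpressure and decoupling ideas can be sketched in a single process. The example below uses a bounded `asyncio.Queue` as a stand-in for a real broker; it is not Kafka or NATS code, and `InferenceIntake`, `SaturatedError`, and `fake_generate` are hypothetical names introduced only for illustration.

```python
import asyncio


class SaturatedError(Exception):
    """Raised when the intake queue is full and the request is rejected."""


class InferenceIntake:
    def __init__(self, max_pending: int) -> None:
        # Bounded queue: the bound is what makes backpressure possible.
        self._queue = asyncio.Queue(maxsize=max_pending)

    def submit(self, prompt: str) -> None:
        """Accept a request, or reject it immediately when saturated."""
        try:
            self._queue.put_nowait(prompt)
        except asyncio.QueueFull:
            raise SaturatedError("queue full; retry later") from None

    async def worker(self, handle) -> None:
        """Pull requests off the queue and process them one at a time."""
        while True:
            prompt = await self._queue.get()
            try:
                await handle(prompt)
            finally:
                self._queue.task_done()

    async def drain(self) -> None:
        """Wait until every accepted request has been processed."""
        await self._queue.join()


async def demo() -> tuple:
    intake = InferenceIntake(max_pending=2)
    accepted = rejected = 0
    # A burst of 5 requests arrives before any worker has run.
    for i in range(5):
        try:
            intake.submit(f"request-{i}")
            accepted += 1
        except SaturatedError:
            rejected += 1

    async def fake_generate(prompt: str) -> None:
        await asyncio.sleep(0)  # placeholder for a model call

    task = asyncio.create_task(intake.worker(fake_generate))
    await intake.drain()
    task.cancel()
    return accepted, rejected


print(asyncio.run(demo()))  # (2, 3)
```

Rejecting early keeps latency bounded for the requests that are accepted; in an HTTP front end, the rejection would typically map to a 429 or 503 response so that callers can retry with backoff.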

The Economics of OpenTelemetry

When a multi-agent recursive loop slows down, finding the root cause requires comprehensive distributed tracing via OpenTelemetry. While Observability (O11y) is mandatory in both Cloud and Bare Metal, the economics differ vastly. Exporting terabytes of trace data from public clouds incurs massive egress fees (the "log tax"). Deploying on ServerMO Bare Metal provides unmetered bandwidth, allowing you to run exhaustive monitoring stacks, plus root access to utilize eBPF for deep kernel network tracing, without inflating your monthly OpEx.
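The cost difference comes down to simple arithmetic on trace volume. The sketch below is only a calculator: the per-gigabyte rate is a placeholder you would replace with your provider's published egress price, and it uses decimal units (1 TB = 1000 GB).

```python
def monthly_egress_cost_usd(trace_tb_per_month: float, usd_per_gb: float) -> float:
    """Monthly cost of exporting trace data at a metered per-GB egress rate."""
    gb_per_month = trace_tb_per_month * 1000.0  # decimal terabytes to gigabytes
    return gb_per_month * usd_per_gb


# Hypothetical inputs: 10 TB of traces per month at an assumed $0.05 per GB.
metered = monthly_egress_cost_usd(10.0, 0.05)
# With unmetered bandwidth the per-GB rate is effectively zero.
unmetered = monthly_egress_cost_usd(10.0, 0.0)
print(f"metered: ${metered:,.2f}  unmetered: ${unmetered:,.2f}")
```

Since the cost grows linearly with trace volume, sampling decisions that look cheap at small scale can dominate the bill once thousands of agents are emitting spans.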

Conclusion: Architecture Above All

Building a reliable, multi-agent AI system is brutally difficult. It requires mastering distributed architecture, queueing theory, and network API proximity. Hardware is merely the foundation; the software and network topology dictate your success.

Public clouds offer heavily managed services that abstract away this complexity, making them excellent for rapid iteration. Conversely, Bare Metal clusters offer raw economic efficiency, predictable routing, and superior API colocation options for sustained inference, provided your DevOps team is equipped to handle the operational burden.

If your engineering team is ready to architect these distributed systems, infrastructure providers like ServerMO supply the unmetered, high-power compute nodes across major US hubs required to bring them to life.

Technical FAQ: Distributed AI Systems

The transition from single-node instances to distributed systems. At scale, the challenges shift from GPU VRAM limits to queueing theory, sequential API calling latency (the N+1 problem), and network colocation.

AI agents execute recursive loops that constantly query external enterprise APIs. Colocating agent infrastructure in major data center hubs like Ashburn, VA minimizes Round Trip Time (RTT), preventing the GPU from sitting idle.

While Observability is required everywhere, public clouds charge high egress fees to export terabytes of log and trace data. Bare metal servers with unmetered bandwidth allow you to run heavy OpenTelemetry stacks without paying a 'log tax', plus they offer root eBPF access for deep kernel tracing.